It wasn’t long ago that challenge courses, adventure parks, and zip line tours numbered only in the hundreds. Today, they number in the thousands. That’s a lot of new businesses still working out their operations as they mature.

To effectively manage risk, comply with industry standards, and stay competitive in the market, owners and operators must review training practices and program procedures throughout the year. This ongoing assessment of programs, documents, personnel, participants, and practices is essential. In a previous edition of this publication, Charles R. (Reb) Gregg said, “… legal counsel for an injured visitor often will investigate the personal history of staff and the employee records of incidents, outside reviews, and inspections.” Reb’s statement illustrates the need for effective assessment of all areas of the business, training practices and documentation included.

The goal: year-round review. Operators sometimes overlook the importance of reviewing training practices and program procedures, not just at the start of a season, but at the end, too, while data is fresh and readily available. This is the time to make adjustments.

It doesn’t always work that way, though. Once the season starts to slow down, many managers tend to let data collection, evaluations, and document review take a back seat. Yet the end of the season is a prime time for ensuring that documents and practices align for the future. An effective assessment allows managers to identify training strengths and weaknesses. It also makes for a more effective partnership with next season’s training provider and helps develop training content that meets the program’s specific needs.

ANSI/ACCT changes. The new ANSI/ACCT 03-2019 Standards address the importance of effective annual training and documentation. They require operators to employ qualified persons to deliver training and establish up-to-date local operating procedures (LOPs) that match current industry practices. Owners and operators must stay current. (See ANSI/ACCT 03-2019 Chapter 2: Operational Standards.)

How do we know if we are effective if we are not committed to assessing training documents and procedures? Does our investment in training and document upgrades result in a measurable increase in the number of visitors, less staff turnover, fewer incidents, an increase in revenue year-over-year, or an improved working environment?

BUILDING AN EVALUATION PROCESS

Staff costs are often the greatest single business expense, so it’s important not to waste time and/or money on ineffective training programs and inefficient procedures. How can we assess training effectiveness? Some of the assessment process steps are easy, some are not. Measuring a shift in the number of participants, near misses, or revenues is a fairly easy quantitative step. Weighing the qualitative data gathered from participant feedback, staff evaluations, and supervisor observations may prove more difficult.

When charged with collecting this type of data, many of us feel like we are trying to measure the air temperature with a tape measure. The key is to build an evaluation process tailored to your program based on existing knowledge and resources. Data collection and information analysis about the program’s activities, characteristics, and outcomes are at the root of the process. The goal is to draw conclusions about the program, improve overall effectiveness, and formulate informed program decisions.

Be an explorer. It’s important to start out with the right mindset, though. Consider yourself an explorer out to discover what is actually there. Who better than you and your staff members who manage the course to provide and review this data?

But we’re not perfect, of course. Those of us not trained in data collection and interpretation tend to look only for what we want to find. Developing quality evaluation tools is a key next step, as it will take you beyond those already-charted waters.

Start at the beginning. It is important to recognize that the end-of-season evaluation process starts before your season even begins. Having data from the previous season is critical for comparison. And the tools to measure program quality and the plan to use those tools must be in place at the start of the new season. Also, performance goals and objectives must be clearly stated for all staff before any training is delivered.

It can be daunting to unpack lots of assessment data, but with preparation and some simple steps, it’ll be easier to draw insights from your data.

The following is a model I created for end-of-season training and document evaluation to help measure the data collected and better interpret the qualitative data. The steps for each are listed from easiest to most difficult, based on the effort owners/operators typically dedicate to each step in the process.

QUANTITATIVE ACTION STEPS:

1. Net Income: Compare net income this season to previous seasons. (easy)

2. Visitor Count: Compare participant numbers this season to previous seasons. You must first track visitor numbers accurately. (fairly easy)

3. Risk Analysis: Compare near misses, incidents, and accidents from this season to previous seasons. First, you must report and categorize near misses, incidents, and accidents. Near-miss identification and reporting can be a proactive approach to risk management. However, collecting near-miss data and not using it to change training procedures, operational practices, and policies can carry more liability than not collecting it at all. (moderately easy)
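The season-over-season comparisons in the three steps above come down to simple percent-change arithmetic. The following is a minimal sketch, not a prescribed method: the metric names and all figures are hypothetical placeholders, and it assumes you already have accurate season totals on hand.

```python
def percent_change(previous, current):
    """Percent change from previous to current; None if there is no baseline."""
    if previous == 0:
        return None
    return (current - previous) * 100 / previous


def compare_seasons(previous, current):
    """Percent change for every metric recorded in the previous season."""
    return {
        metric: percent_change(previous[metric], current[metric])
        for metric in previous
    }


# Hypothetical season totals; replace with your own records.
last_season = {"net_income": 120000, "visitors": 8000, "near_misses": 25, "incidents": 5}
this_season = {"net_income": 132000, "visitors": 8400, "near_misses": 20, "incidents": 4}

changes = compare_seasons(last_season, this_season)
for metric, change in changes.items():
    label = "n/a" if change is None else f"{change:+.1f}%"
    print(f"{metric}: {label}")
```

With these made-up numbers, net income is up 10 percent, visitor count is up 5 percent, and near misses and incidents are each down 20 percent. A drop in reported near misses is worth a second look before celebrating, since it can reflect less reporting rather than fewer events.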

QUALITATIVE ACTION STEPS:

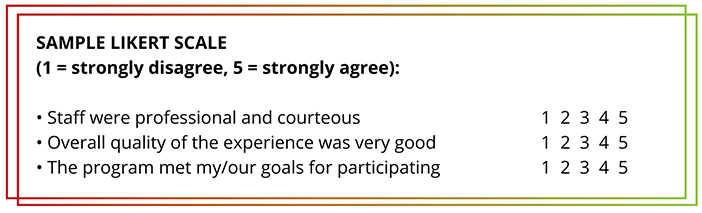

First, create and use a 4- or 5-point Likert scale format for each category that matches your evaluation questions with program goals to determine what you want to know (see sample, above). A Likert scale includes a series of questions and a range of, say, five response choices that are each associated with a value, such as “1 = strongly disagree” to “5 = strongly agree,” or “1 = poor” to “5 = excellent.” A 5-point scale has a neutral midpoint; a 4-point scale does not, so respondents must lean one way or the other. Create questions that align with your desired program outcomes.

Second, craft two or three open-ended questions to add after each Likert scale section. Open-ended questions allow you to gather information that is not typically quantifiable.

Here’s what this might look like for the various guest and staff groups:

1. Participant Feedback: Each participant completes and submits an evaluation after each program. (fairly difficult)

Some sample qualitative questions for guests are shown in the Likert scale example, above. Here are some sample open-ended questions for guests:

• What did you like the most?

• Where can we improve the most?

• What do you wish was different?

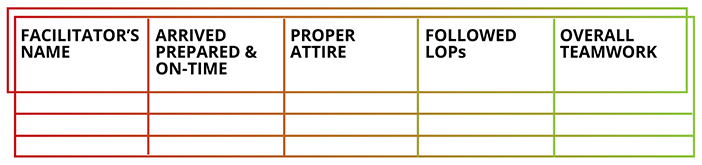

2. Staff Evaluation and Feedback: Staff are instructed to anonymously evaluate colleagues and themselves after each program session. (moderately difficult)

Sample quantitative questions for staff:

• Rate each facilitator’s performance for the day on a scale of 1 (poor) to 5 (excellent) on the chart (see example, below).

Sample qualitative questions for staff:

• What is one thing that was confusing or inconsistent when implementing our LOPs?

• In terms of staff skills, execution, and risk management, what is a strength of our team?

• What is an area that needs the most attention or improvement?

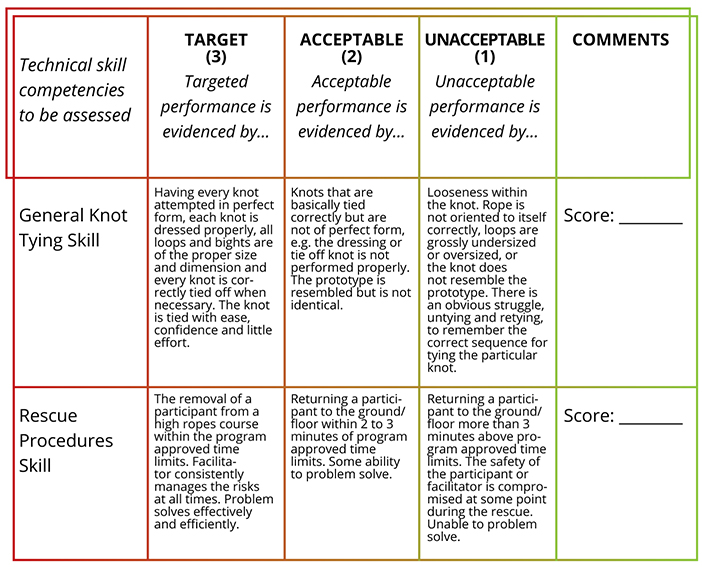

Third, craft a supervisory tool. Supervisors can use a staff observation and evaluation tool to measure the performance, behaviors, and/or competencies staff must exhibit. This can be objective, subjective, or a combination of the two. The aim is to see if staff can show or demonstrate certain skill competencies or knowledge, not to provide a written grade.

3. Supervisor Observations and Evaluations: For this to be valid, the supervisor must regularly go into the field and actively observe staff in similar program situations. (more difficult)

Note: Training evaluators (i.e., supervisors) must have a set of standard evaluation protocols to guide their work. Protocols provide a means for consistent evaluation each time staff are observed.

Sample qualitative observations:

• Guide used PPE appropriately.

• Staff member conducted an accurate group orientation.

• Staff member provided adequate supervision for all participants in their area of responsibility at all times.

Supervisors can gather valuable insights by observing staff while out in the field, and evaluating what they see using standard evaluation protocols.
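Once forms start coming in, the Likert-scale section of each tool can be summarized question by question. The sketch below is one possible approach, assuming a 5-point scale; the question names, the sample ratings, and the choice to treat ratings of 4 or 5 as "favorable" are all illustrative assumptions.

```python
from statistics import mean

# Hypothetical responses: one dict per completed form, question -> rating (1 to 5).
responses = [
    {"felt_safe": 5, "clear_instructions": 4, "staff_helpful": 5},
    {"felt_safe": 4, "clear_instructions": 3, "staff_helpful": 5},
    {"felt_safe": 5, "clear_instructions": 2, "staff_helpful": 4},
    {"felt_safe": 3, "clear_instructions": 3, "staff_helpful": 4},
]


def summarize_likert(forms, favorable_threshold=4):
    """Per question: response count, mean rating, and percent rated favorable."""
    questions = sorted({question for form in forms for question in form})
    summary = {}
    for question in questions:
        # Skip forms where the question was left blank.
        ratings = [form[question] for form in forms if question in form]
        favorable = sum(1 for r in ratings if r >= favorable_threshold)
        summary[question] = {
            "n": len(ratings),
            "mean": round(mean(ratings), 2),
            "pct_favorable": round(100 * favorable / len(ratings), 1),
        }
    return summary


summary = summarize_likert(responses)
for question, stats in summary.items():
    print(question, stats)
```

Running the same summary separately for participant, staff, and supervisor forms gives three sets of numbers that can be compared side by side at the end of the season.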

PUT IT ALL TOGETHER

At the end of the season, take all of the data you’ve collected and compile it to create the “End-of-Season Assessment Summary.” This will take some work, but it’s well worth the effort. Follow these steps to turn your data into a useful summary:

1. Collate the Likert scale data section from each assessment tool.

2. Quantify. To draw conclusions from qualitative data—which includes responses to open-ended questions—you must quantify it by turning the data from words or images into numbers.

Here’s how:

• Review the responses to open-ended questions and look for emerging patterns.

• For each question, place responses that are similar and fit into an emerging pattern together into categories or “buckets.” Ensure that everything in each “bucket” is related in some meaningful way.

• Two or three “buckets” should stand out after reviewing most of the responses.

• Count the occurrences in each bucket, and figure the rate of occurrence as a percentage of all responses. For example, one of the “buckets” contains comments that indicate that not enough time is spent at ground school for participants to be comfortable performing skills at height. These comments show up in 17 of 100 participant responses. This suggests that about 17 percent of the time, staff is conducting the ground school too hastily for the guests. They may be leaving out steps, not providing enough information for participants, or moving through the steps too quickly for the individual or group.

3. Compare the participant, staff, and supervisor data to identify common strengths or concerns. This triangulation of data reveals where training is effective, what program policies and procedures are working, and what areas need attention.

4. Make changes. Use the results to inform training upgrades and document revisions, and to make appropriate adjustments to marketing tools, policies, and/or procedures. Engage staff and training providers in this step. This demonstrates that supervisors value staff input. The result can be increased staff buy-in, improved morale, and reduced staff turnover.
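The counting described in step 2 can be sketched in a few lines once each open-ended response has been read and assigned to a bucket by hand. This is only an illustration: the bucket labels and every count other than the 17-in-100 ground school example are hypothetical.

```python
from collections import Counter

# Hypothetical coded comments: one bucket label per open-ended response,
# assigned by a reviewer after reading the response.
coded_comments = (
    ["ground_school_too_short"] * 17
    + ["long_wait_times"] * 9
    + ["friendly_staff"] * 30
    + ["other"] * 44
)


def bucket_rates(labels):
    """Percent of all responses that fall into each bucket, largest first."""
    counts = Counter(labels)
    total = len(labels)
    return {
        bucket: round(100 * count / total, 1)
        for bucket, count in counts.most_common()
    }


rates = bucket_rates(coded_comments)
for bucket, pct in rates.items():
    print(f"{bucket}: {pct}%")
```

Here the ground school bucket works out to 17 percent of responses, matching the example in step 2. The code only does the tallying; deciding which bucket a comment belongs in remains a judgment call for the reviewer.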

FIND A GOOD PARTNER

You may well want to enlist a third-party qualified professional to assist with all this, and to get certifications for some staff. In addition, hold an in-house LOP orientation, delivered by a qualified person, for site-specific operational procedures. It’s important to maintain written LOPs, and keep records of staff skills verification, evidence of efforts to stay up-to-date with current industry practices, procedure assessments, and any implemented changes.

Owners and operators should expect that third-party training providers are abreast of current industry standards and practices. However, a trainer cannot address specific training needs unless he or she knows where the needs lie. The end-of-the-year training assessment, training documents review, and an external program review help identify those needs.

END-OF-SEASON EFFORTS ARE KEY

The aerial adventure park, zip line canopy tour, and challenge course market finds itself in a period of enormous growth. In an era of increased competition, amplified public scrutiny, social media, regulation, and, yes, litigation, owners and operators must be more intentional about how decisions are made. Implementing end-of-season, data-driven efforts that methodically evaluate programs, documents, personnel, participants, and practices is crucial to growth and sustainability.

End-of-season training review findings will lead to continuous improvement.